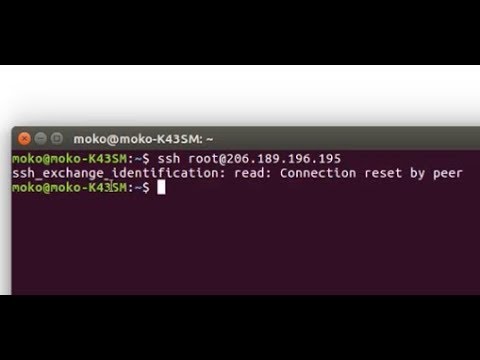

I'm getting this error when trying to SSH into my droplet: `ssh_exchange_identification: read: Connection reset by peer`. I was able to log in an hour ago, so I'm not sure what is going on.

You can allow SSH connections through the firewall user interface (some providers offer that). If you have an alternative way to log in (for example, DigitalOcean provides a console button), you can run the commands below: `sudo ufw allow ssh` and `sudo ufw allow 22` – BSB Feb 8 at 5:41.

ssh_exchange_identification: read: Connection reset by peer. December 24, 2018.
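Before changing firewall rules, it can help to see how far a plain TCP connection to the SSH port gets. The sketch below is only an illustration: the function name `probe_ssh_banner` and its return labels are made up for this example, and the comments give likely causes, not definitive ones. It connects to the port and tries to read the identification line an SSH server normally sends first.

```python
import socket


def probe_ssh_banner(host, port=22, timeout=5.0):
    """Connect to host:port and try to read the SSH identification string.

    Returns a short label: "banner: ...", "refused", "timeout", "reset" or "closed".
    Other network errors (for example DNS failures) are left to propagate.
    """
    try:
        with socket.create_connection((host, port), timeout=timeout) as sock:
            data = sock.recv(256)
    except ConnectionRefusedError:
        return "refused"   # nothing listening, or a firewall actively rejects
    except socket.timeout:
        return "timeout"   # packets silently dropped, often a firewall rule
    except ConnectionResetError:
        return "reset"     # TCP connected, then the peer reset the connection
    if not data:
        return "closed"    # peer closed cleanly without sending a banner
    return "banner: " + data.decode("ascii", errors="replace").strip()
```

A result of `"timeout"` or `"refused"` points towards the firewall or a stopped sshd, which is where the `ufw` commands may help. A result of `"reset"` or `"closed"` means the port is reachable but the server dropped the connection before the banner, so the cause is more likely on the server itself.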

- srinathhs, August 30, 2014: Even I am facing constant connection reset problems in the Singapore region. It occurs when using Vim and editing code. Not sure what is going on. I will add the `-vv` option and update the results.

- bdoesborg, January 13, 2015: Happened to me on a droplet that ran out of memory.

- nathanscherneck, August 20, 2017: Just want to add that I had the same error on file uploads. Turned out I was running out of memory too. Resized my droplet and haven't had a recurrence.

- ReneMurro, September 17, 2018: Fixed it for me as well. Anybody know why that is the case? We don't really have much going on on the server.
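One plausible explanation for the memory reports above, offered as an assumption and not confirmed in this thread: sshd has to start a new process for each incoming connection, and on a droplet with no memory to spare that can fail, or the kernel may kill processes, so the connection drops before the SSH handshake starts. A rough way to check from the provider console is to read `/proc/meminfo`. The helper names and the 5% threshold below are arbitrary choices for illustration, and the `MemAvailable` field is assumed to be present (it is on reasonably recent Linux kernels).

```python
def parse_meminfo(text):
    """Parse /proc/meminfo-style text into a dict of {field: integer value}."""
    values = {}
    for line in text.splitlines():
        name, sep, rest = line.partition(":")
        parts = rest.split()
        if sep and parts and parts[0].isdigit():
            values[name.strip()] = int(parts[0])  # most fields are in kB
    return values


def available_fraction(meminfo):
    """Fraction of RAM the kernel estimates is available to new processes."""
    return meminfo["MemAvailable"] / meminfo["MemTotal"]


def is_memory_low(meminfo, threshold=0.05):
    """True when less than `threshold` of total RAM is available (assumed cutoff)."""
    return available_fraction(meminfo) < threshold
```

On the droplet, `is_memory_low(parse_meminfo(open("/proc/meminfo").read()))` gives a quick yes or no. If it is consistently low, resizing or adding swap would be the next thing to try, as the comments above suggest.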

Comments

commented Jun 20, 2017

Seen this flake a few times now: Is there anything we can do to make this stage more robust? (P2 because I haven't seen it in the merge queue, may be specific to our extended job?)

added area/tests, kind/test-flake, priority/P2 labels Jun 20, 2017

commented Jun 20, 2017

@jim-minter fyi

commented Jun 20, 2017

The failure was on the server side when we were using scp: Not clear what killed sshd on the target host, or if the host just died, but it looks like total failure on that end. Not sure there's much more we can do.

commented Jun 20, 2017

Is it worth adding a retry loop?

commented Jun 20, 2017

Looks like every attempt to hit the machine for the rest of the job also failed. I'm not sure we need to behave gracefully when the VM falls over entirely like that. If we see this more than once and we have a reason to think retrying would help, we could try to add it. I think scp and ssh both emit a different exit code when they themselves fail versus when the remote command fails.
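For reference, a retry loop along the lines discussed here could look roughly like the sketch below. It relies on the documented behaviour of `ssh`, which exits with 255 when ssh itself hits an error and otherwise with the remote command's exit status. Whether `scp` separates the two cases as cleanly is an assumption that would need checking. The function name, its parameters and the backoff policy are hypothetical, not something this job uses.

```python
import subprocess
import time

# ssh(1) exits with 255 when ssh itself fails (for example, connection reset).
SSH_TRANSPORT_FAILURE = 255


def run_ssh_with_retry(argv, attempts=3, delay=5.0, run=None, sleep=time.sleep):
    """Run argv, retrying only when the exit code signals an ssh-level failure.

    `run` takes the argv list and returns an exit code; it defaults to
    subprocess.run and is injectable so the loop can be tested without a host.
    Returns the exit code of the last attempt.
    """
    if run is None:
        run = lambda a: subprocess.run(a).returncode
    code = SSH_TRANSPORT_FAILURE
    for attempt in range(1, attempts + 1):
        code = run(argv)
        if code != SSH_TRANSPORT_FAILURE:
            return code  # success, or a remote command failure: do not retry
        if attempt < attempts:
            sleep(delay * attempt)  # simple linear backoff between attempts
    return code
```

A call such as `run_ssh_with_retry(["ssh", "user@host", "true"])` would retry a reset connection up to three times but return a failing remote command's status immediately. As noted above, this only helps when the host comes back; if the VM is gone for the rest of the job, every attempt fails the same way.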

commented Jun 20, 2017

OK, I'll close for now, but I do think I've seen it at least twice in the last few days.

commented Jun 28, 2017

Looks like this flake is showing up again: https://ci.openshift.redhat.com/jenkins/job/test_branch_origin_extended_image_ecosystem/113

commented Jul 5, 2017

changed the title Stage failure: use a ramdisk for etcd Jul 5, 2017

referenced this issue Sep 19, 2017

Closed: FINISHED STAGE: FAILURE: RECORD THE STARTING METADATA #16428

commented Dec 14, 2017

commented Dec 14, 2017

/shrug

commented Dec 14, 2017

commented Dec 14, 2017

@dmage there is really nothing we can do about this -- not sure reporting every AWS API flake is worth your time

commented Dec 15, 2017

@stevekuznetsov if we're seeing this so often, perhaps the jobs themselves need to detect this and be rerun?

commented Dec 15, 2017

If we were seeing it much more commonly, maybe.
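If detection in the jobs were ever added, one minimal approach would be to match known infrastructure failure messages in the job log and allow a bounded number of reruns. Everything in this sketch is hypothetical: the pattern list, the function names and the rerun limit are illustrative guesses, not taken from the actual CI setup.

```python
import re

# Hypothetical signatures of infrastructure failures; tune for the real job logs.
FLAKE_PATTERNS = [
    re.compile(r"ssh_exchange_identification: .*Connection reset by peer"),
    re.compile(r"Connection (reset by peer|closed by remote host)"),
    re.compile(r"lost connection"),
]


def looks_like_infra_flake(log_text):
    """Return True if any log line matches a known infrastructure signature."""
    return any(
        pattern.search(line)
        for line in log_text.splitlines()
        for pattern in FLAKE_PATTERNS
    )


def should_rerun(exit_code, log_text, reruns_so_far, max_reruns=1):
    """Rerun only failed jobs that look like infra flakes, a bounded number of times."""
    return (
        exit_code != 0
        and reruns_so_far < max_reruns
        and looks_like_infra_flake(log_text)
    )
```

Capping reruns keeps a genuinely broken host from looping forever, and requiring a pattern match keeps real test failures from being hidden behind automatic reruns.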

commented Mar 15, 2018

Issues go stale after 90d of inactivity. Mark the issue as fresh by commenting `/remove-lifecycle stale`. Stale issues rot after an additional 30d of inactivity and eventually close. Exclude this issue from closing by commenting `/lifecycle frozen`. If this issue is safe to close now please do so with `/close`.

/lifecycle stale

commented Apr 14, 2018

Stale issues rot after 30d of inactivity. Mark the issue as fresh by commenting `/remove-lifecycle rotten`. Rotten issues close after an additional 30d of inactivity. Exclude this issue from closing by commenting `/lifecycle frozen`. If this issue is safe to close now please do so with `/close`.

/lifecycle rotten
/remove-lifecycle stale

added lifecycle/rotten and removed lifecycle/stale labels Apr 14, 2018

commented May 15, 2018

Rotten issues close after 30d of inactivity. Reopen the issue by commenting `/reopen`. Mark the issue as fresh by commenting `/remove-lifecycle rotten`. Exclude this issue from closing again by commenting `/lifecycle frozen`.

/close
